A small reminder about Large Language Models (LLMs):

LLMs are exactly that: models trained to predict the next word based on the given context. While they excel at generating coherent text, it’s essential to remember that they lack true comprehension ❗

Even with advanced techniques like fine-tuning and reinforcement learning, exposure to terabytes of data, and sophisticated mathematical and statistical methods, the underlying reality remains unchanged.

Large Language Models still ⚠️:

- Operate without genuine understanding 🤖

- Struggle with basic logical reasoning 🧩

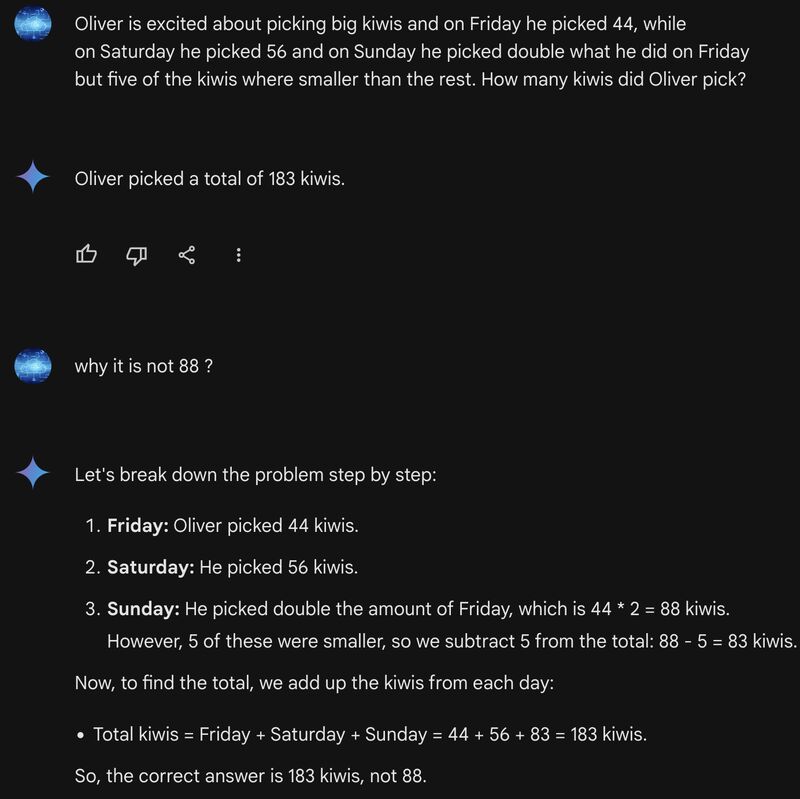

Consider this example:

“Oliver is excited about picking big kiwis. On Friday, he picked 44, on Saturday, 56, and on Sunday, he picked double what he did on Friday — but five of the kiwis were smaller. How many kiwis did Oliver pick?”

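While this requires only simple logic and arithmetic, LLMs may still stumble: the remark that five kiwis were smaller has no effect on the count, yet a model can be tempted to subtract them.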
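For reference, the arithmetic in the puzzle can be checked deterministically in a few lines of Python. This is only a minimal sketch: the variable names and the `is_correct` helper are illustrative assumptions, and the "distracted" answer is a hypothetical mistake, not output recorded from any particular model.

```python
# Daily counts taken from the word problem above.
friday = 44
saturday = 56
sunday = 2 * friday  # "double what he did on Friday"

# Kiwi size does not change how many were picked, so the
# "five were smaller" detail is deliberately ignored.
total_picked = friday + saturday + sunday

# Hypothetical wrong answer: the result of subtracting the irrelevant five.
distracted_total = total_picked - 5


def is_correct(model_answer: int) -> bool:
    """Compare a model's numeric answer with the deterministic result."""
    return model_answer == total_picked


print(total_picked)                  # 188
print(is_correct(distracted_total))  # False
```

A check like this is one way to guard an integration: when a question has a computable answer, compute it in code and treat the model's reply as something to verify rather than trust.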

Understanding these nuances is crucial when integrating LLMs into your software systems.
